Building an AI Translation Demo with Claude

Rapid validation through a demo during the requirements phase helps clarify how a feature should work and guide subsequent optimization.

Recently, I have been working on requirements for AI translation and wanted to know: What limitations does AI translation have? Which model delivers the best quality and the fastest speed? In the past, we might only have been able to try competing products, but now we can use Claude to quickly build a demo and validate ideas.

At the same time, I also used this demo to optimize the speed and quality of AI translation, providing preliminary experience and practice for development, which is more conducive to later planning.

In this demo, the translation architecture went through a full iteration process: from serial execution, to concurrent requests, then to stable multi-segment JSON output, and finally to JSON streaming output.

This change significantly reduced the overall time required for long-text translation from the initial 26s to around 2.7s. Although there is still a gap compared with traditional engines in terms of absolute speed, with multi-key polling and caching mechanisms, the experience already has plenty of room for further improvement.

In addition, after repeated testing, I also worked out a set of parameter combinations that balance quality and stability.

If you want to understand the specific problems and solutions, please keep reading:

Core Engineering Optimizations

To address the inherent problems of AI translation being “slow” and prone to “hallucination,” I validated strategies in three directions in the demo code:

1. Response Experience: Concurrency Limit Optimization

At present, choosing a fast model can improve speed to some extent, but compared with traditional translation, there is definitely still a gap. So I tried optimizing the request logic to deliver a relatively good translation experience while balancing model speed and concurrency limits:

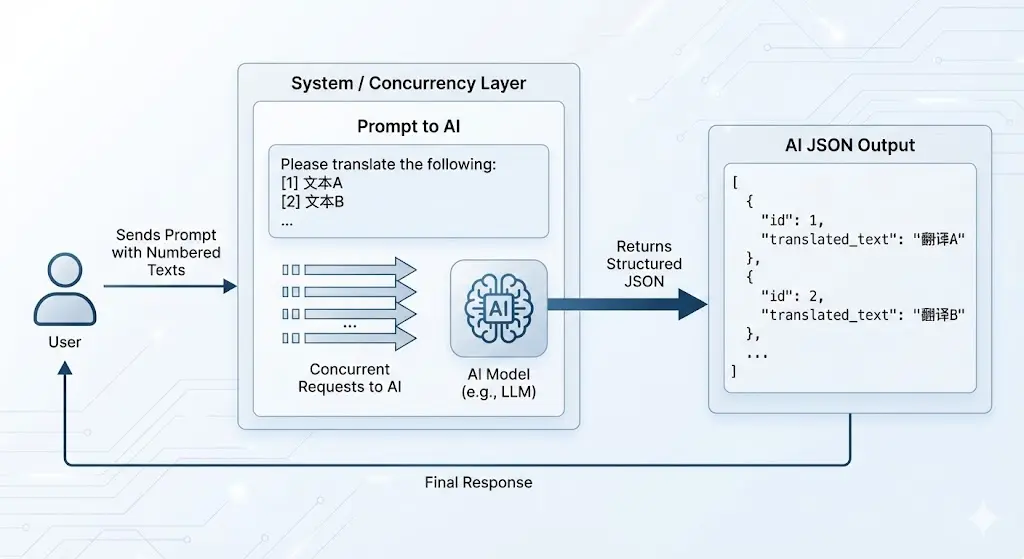

Concurrent requests are certainly the first step everyone would think of. That’s right—this does provide a considerable boost to translation speed.

After optimization, the time required for the same text dropped from 24s to 6s, and the improvement was indeed obvious. But compared with the 1s of traditional translation, the gap still cannot be ignored.

Can it be even faster? The answer is yes.

The previous strategy was simple concurrency, meaning “one text segment = one request.” Since the model supports long context, we can completely upgrade this to packaged sending: within the Token limit, merge multiple text segments into the same request. This makes full use of the context window and allows more content to be processed at once, greatly improving efficiency.

A new challenge follows: when multiple segments are packaged and sent together, how can the returned translations be accurately mapped back to each original segment?

To solve this alignment problem, I adopted a strategy of paragraph numbering + JSON structured output. By adding an index to each text segment and forcing the AI to return structured JSON data, multiple segments can be translated simultaneously while keeping the order stable.

After switching to JSON, streaming output was gone, which was also unacceptable. This led me to discover the concept of NDJSON, which can enable streaming output of JSON data.

After this round of optimization, the AI translation time was reduced from 6.08s to 2.88s.

That’s all for optimizing AI translation speed. Combined with multi-key polling and translation caching that can be implemented later on the server side, the experience can be further improved.

2. Translation Quality: Prompt Tuning and Terminology Protection

I referred to Immersive Translate’s Web3-domain translation prompt and clarified the output specifications. It requires retaining professional terms (such as ETH, Gas fee, etc.), and also ensures consistent output order during batch translation through an indexed input format ([1] text... [2] text...), avoiding the problem of multiple segments being returned out of order.

You are a professional ${targetLang} native translator specialized in Web3 and blockchain content.

## Translation Rules

1. Output only the translated content, without explanations or additional text

2. Maintain all blockchain terminology, cryptocurrency names, and token symbols

in their original form (e.g., ETH, BTC, USDT, Solana, Ethereum)

3. Preserve technical Web3 concepts with commonly accepted translations:

DeFi, NFT, DAO, DEX, CEX, AMM, TVL, APY, APR, gas fee, smart contract,

wallet, staking, yield farming, liquidity pool

4. Keep all addresses, transaction hashes, and code snippets exactly as in the original

5. Translate from ${sourceLang} to ${targetLang} while preserving technical meaning

6. Ensure fluent, natural expression in ${targetLang} while maintaining technical accuracy3. Parameter Control

Although formal development had not yet started during the requirements phase, I rehearsed the future control logic at the demo level, mainly covering two dimensions:

Token Limits and Intelligent Chunking

Based on the debugging results, I applied certain controls to the number of segments and character length in the same request:

- Temperature = 0 - Deterministic output, more stable results

- Control the number of paragraphs (4 segments) - Avoid timeout caused by overly large single requests

- Maximum text characters per request (3,000) - Balance output speed and content length

Thinking Mode Limitation

For models with thinking capabilities, such as Gemini 3.0 Flash, adjusting thinkingLevel can also improve translation speed in a clear-cut task like translation.